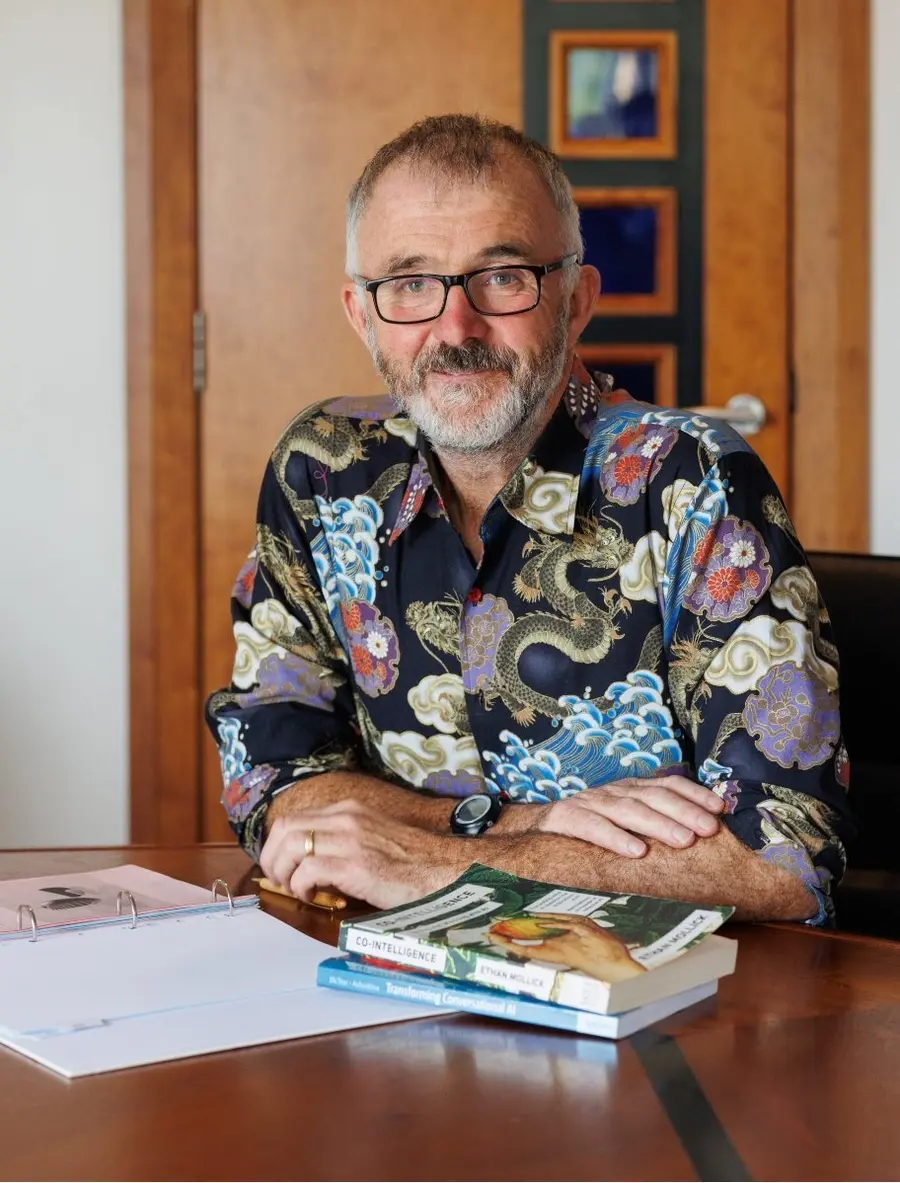

Barry Phillips (CEO) BEM founded Legal Island in 1998. He is a qualified barrister, trainer, coach and meditator and a regular speaker both here and abroad. He also volunteers as a mentor to aspiring law students on the Migrant Leaders Programme.

Barry has trained hundreds of HR professionals on how to use GenAI in the workplace and is the author of the book “ChatGPT in HR – A Practical Guide for Employers and HR Professionals”.

Barry is an Ironman and lists Russian language and wild camping as his favourite pastimes.

This week Barry Phillips argues that AI slop isn’t a technology problem but a human one, and that it is quietly damaging trust, judgement and legal compliance at work.

Transcript:

Hello Humans!

And welcome to the weekly podcast that aims to summarise an important issue in AI for HR in around five minutes. My name is Barry Phillips.

I want to talk today about something that's probably quietly spreading through your organisation right now and it's got a name. It's called AI slop. And if you haven't heard that term yet, trust me, you've almost certainly seen it.

Here's a fact. AI doesn't produce slop in the workplace. Humans do. It's we humans who choose to use it, no one else. The AI doesn't hit send. The AI doesn't submit the report, sign off the policy, or paste that wall of text into the all-staff email. A person does. And that distinction? It matters enormously.

So, what are we actually talking about? AI slop is the output you get when someone hands a task entirely to an AI tool, with no editing, no critical thinking and no human judgment applied, and then passes it off as their own finished work.

You know it when you see it. It's the performance review that says an employee "demonstrates a proactive approach to synergising cross-functional deliverables." It's the job description that reads like it was written by a robot because, well, it was. It's the training document that's technically correct, says absolutely nothing useful, and could apply to any company in any industry on the planet.

It's not dangerous because AI wrote it. It's dangerous because nobody checked it and the sender has shown that they don’t care.

In HR, the stakes around this are particularly high. We deal in language that has real consequences: contracts, grievance letters, disciplinary procedures, wellbeing communications. When that language is vague, generic, or just off, people notice. Trust erodes. And in a function built entirely on human relationships, that's a serious problem.

There's also a legal dimension. AI tools can get facts wrong, reflect bias baked into their training data, and produce advice that doesn't comply with your jurisdiction's employment law. If your HR team is copying AI output without review, you may be signing off on things that are simply wrong or, worse, unlawful. And for us at Legal Island, where we've been doing legal compliance for almost 30 years, that’s a big problem.

Right, so what do we do about it? Here are five practical things HR teams and managers can start doing now.

One: Set a clear AI use policy. Not a ban. A framework. People are using these tools whether you have a policy or not, so get ahead of it. Define where AI assistance is appropriate, where it needs human sign-off, and where it shouldn't be used at all: think anything personally sensitive or legally significant.

Two: Train people on the difference between a prompt and a finished product. AI output is a first draft. A starting point. Treat it like a junior colleague's rough attempt: useful, but it needs your expertise layered on top.

Three: Make quality visible. In performance conversations and team culture, start talking about the craft of communication. Does this email sound like us? Does this policy actually fit our people? Rewarding thoughtfulness over speed sends a powerful signal.

Four: Build in a human review step for anything and everything that matters. Any document going to employees, any communication touching a sensitive topic: it should have a named person who owns it and has actually read it. That sounds obvious. In a lot of organisations right now, it isn't happening.

Recently, I had a bad experience with an airline I used to love and advocate for. I complained. They refunded all of my money. But I’m hurting because throughout the entire process there was no evidence of a single human being on their side acknowledging my pain and the inconvenience they put me to.

Five: Don't shame AI use; redirect it. If you catch someone submitting unreviewed AI output, the conversation isn't "you cheated." It's "this doesn't represent your thinking. I need your thinking in here." The goal is judgment, not prohibition.

So, here's where I'll leave you.

The organisations that will use AI well aren't the ones that use it most. They're the ones that use it wisely, where human judgment is still in the room, still asking the hard questions, still caring whether the words on the page actually mean something to the person reading them.

So, the next time you're about to hit send on something AI wrote, pause. Read it. Really read it. Ask yourself: does this sound like me? Does this serve the person it's meant for? Is my name on this because I wrote it, or just because I didn't bother to stop it?

That pause? That's the most human thing you can do right now. And it might just be the most important.

Thanks for listening.

Until next week, bye for now!

Get the Most from Copilot (Level 1): A Practical Guide for HR

AI Literacy Skills at Work: Safe, Ethical and Effective Use