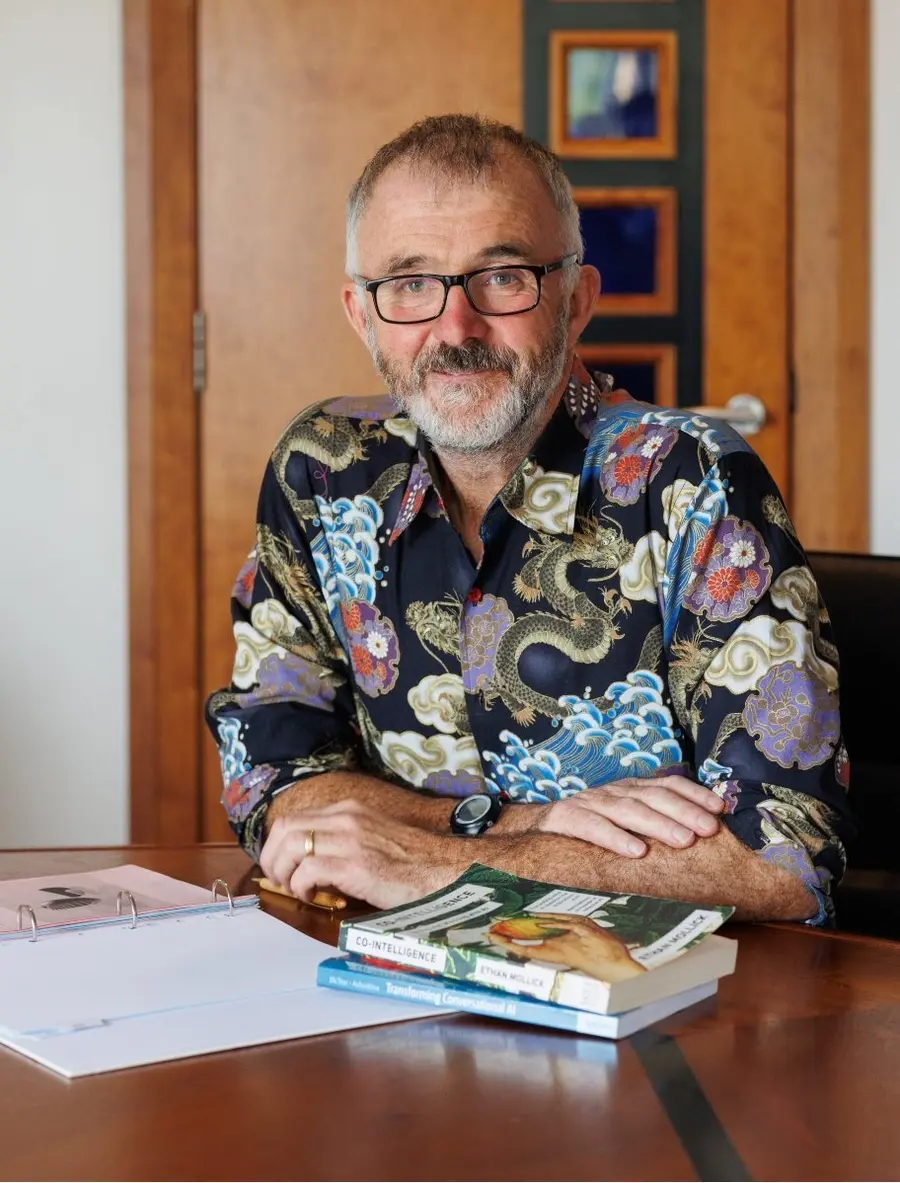

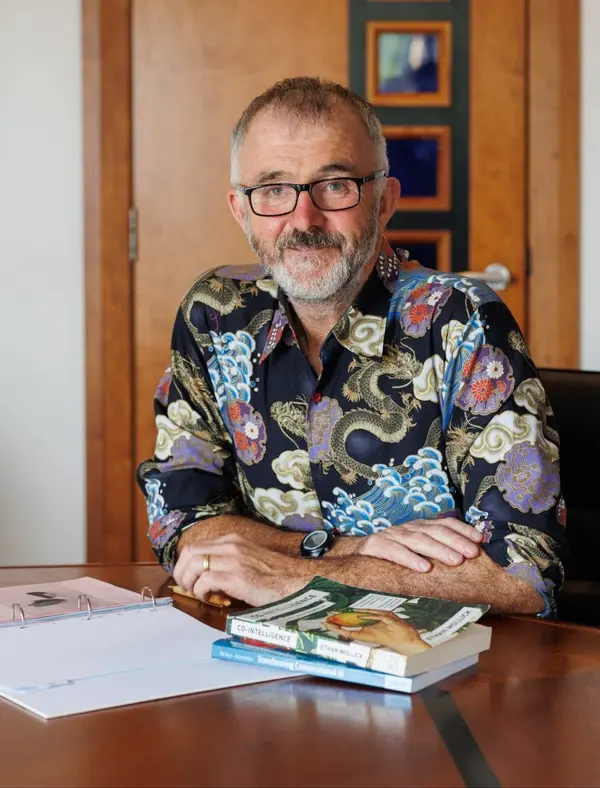

Barry Phillips (CEO) BEM founded Legal Island in 1998. He is a qualified barrister, trainer, coach and meditator and a regular speaker both here and abroad. He also volunteers as a mentor to aspiring law students on the Migrant Leaders Programme.

Barry has trained hundreds of HR professionals on how to use GenAI in the workplace and is the author of the book “ChatGPT in HR – A Practical Guide for Employers and HR Professionals”.

Barry is an Ironman and lists Russian language and wild camping as his favourite pastimes.

This week Barry Phillips makes a cri de coeur and argues for the use at work of one important word that changes everything.

Transcript:

Hello Humans!

And welcome to the weekly podcast that aims to summarise in five minutes or less an important AI issue relevant to the world of HR.

This week let's talk about the four words doing the heaviest lifting in HR right now: "human in the loop."

It sounds comforting, doesn't it? A real person, watching over the algorithm, ready to step in. Reassuring. Regulator-friendly. And completely useless if the person in question is, metaphorically speaking, a monkey.

Because here's the thing. Under the EU AI Act, AI used for recruitment, selection, candidate ranking, performance monitoring, task allocation, promotion, or termination is high-risk. And high-risk means effective human oversight, not theatrical human oversight. The law expects someone who can actually understand the model's output, spot the bias, challenge the recommendation, and stop the system from driving the decision on its own. These rules are due to come into force in August of this year, unless Brussels decides to defer the date. Watch this space.

A monkey can click "approve." A monkey cannot interrogate a shortlisting algorithm that's quietly downranking every candidate called Aisha.

So let's ditch the phrase "human in the loop" and upgrade it to "trained human in the loop." Because the training is the whole ballgame. Your reviewer needs to understand what the tool is doing, what it isn't doing, where it tends to fail and, crucially, they need the authority to override it. Not "raise a concern." Override.

In practice, this means no fully automated rejections. No AI-only ranking deciding who makes the shortlist. No algorithm firing someone on a Tuesday because their keystroke velocity dipped on Monday. What you want is "AI suggests, human decides" with documented review steps, bias checks, and a proper escalation route when the output looks off.
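For readers who think in workflows, the "AI suggests, human decides" pattern can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a compliance tool and not anything prescribed by the EU AI Act: the names `Suggestion`, `Decision`, `ReviewLog`, `decide` and `override_rate` are all hypothetical, and the candidate data is invented. The point it tries to show is that the tool's recommendation is only an input, every outcome needs a named reviewer and written notes, and escalation is always available as an outcome.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from enum import Enum


class Outcome(Enum):
    ADVANCE = "advance"
    REJECT = "reject"
    ESCALATE = "escalate"  # hand the case on when the output looks off


@dataclass
class Suggestion:
    """What the AI tool proposes. Hypothetical shape; never final on its own."""
    candidate_id: str
    recommended: Outcome
    rationale: str


@dataclass
class Decision:
    """The documented human decision that actually takes effect."""
    candidate_id: str
    outcome: Outcome
    reviewer: str
    notes: str
    overrode_ai: bool
    decided_at: str


@dataclass
class ReviewLog:
    entries: list = field(default_factory=list)

    def decide(self, suggestion, reviewer, outcome, notes):
        # No decision exists without a named human and a written reason,
        # even when the reviewer agrees with the tool.
        if not reviewer.strip():
            raise ValueError("a named human reviewer is required")
        if not notes.strip():
            raise ValueError("review notes are required, even when agreeing")
        decision = Decision(
            candidate_id=suggestion.candidate_id,
            outcome=outcome,
            reviewer=reviewer,
            notes=notes,
            overrode_ai=(outcome != suggestion.recommended),
            decided_at=datetime.now(timezone.utc).isoformat(),
        )
        self.entries.append(decision)
        return decision

    def override_rate(self):
        # Share of logged decisions where the human departed from the tool.
        if not self.entries:
            return 0.0
        return sum(d.overrode_ai for d in self.entries) / len(self.entries)


# Usage with invented data: the tool suggests rejection, the reviewer overrides.
log = ReviewLog()
suggestion = Suggestion("cand-001", Outcome.REJECT, "low keyword match")
decision = log.decide(
    suggestion,
    reviewer="J. Smith",
    outcome=Outcome.ADVANCE,
    notes="Relevant experience, described in different terms.",
)
print(decision.overrode_ai)
```

The design choice worth noticing is that there is no code path from a `Suggestion` to an outcome without a call to `decide`. The `override_rate` figure is only a rough signal and an assumption of this sketch: a rate that sits at zero for months might be worth a closer look, but it proves nothing on its own.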

Yes, some narrow admin tools escape this. Sorting, indexing, translation, purely preparatory stuff. But the moment your system is profiling people or predicting their suitability, reliability, or work performance, you're back in high-risk territory. Which covers, let's be honest, most of the shiny tools currently on your procurement shortlist.

So train your humans. Give them the time to actually look. Give them the permission to actually disagree. Otherwise, you haven't built oversight — you've built a liability dressed up as a safeguard.

And now the contrarian bit.

Because here's what nobody in HR wants to say out loud: your trained human in the loop might still be the weakest part of the system. Not because they're a monkey but because they're a human. Tired. Deferential. Susceptible to automation bias. Quietly agreeing with the machine because disagreeing means paperwork, three meetings, and a difficult conversation with Legal.

The real risk isn't that your AI makes a biased decision. It's that your trained reviewer rubber-stamps it anyway — and now the bias has a human signature on it, which is precisely what the law was trying to prevent.

So, yes. Trained human in the loop. Absolutely. Just remember the training never stops.

Because the moment your reviewer becomes comfortable agreeing, they've quietly promoted themselves back to monkey.

Thank you for listening.

Until next week, bye for now.

AI Literacy Skills at Work: Safe, Ethical and Effective Use
